3D Gaussian Splatting (3DGS) is a 2-year-old technique that can produce 3D worlds from either a video or a few hundred images of a location.

When you view the 3D world, objects change colour, size and transparency based on their position relative to the camera, which makes them appear more realistic than they would otherwise.

These worlds can be viewed at 100+ fps on modern hardware.

They also generate much faster than NeRFs and are much more realistic than previous photogrammetry techniques.

There are Web UIs that can be used to edit and optimise 3D Gaussian Splats after they've been initially generated.

The team behind the "3D Gaussian Splatting for Real-Time Radiance Field Rendering" paper published a reference implementation that can both build and render 3D Gaussian Splats. Somehow all of this functionality is made up of only 3K lines of Python.

There are three major approaches / profiles for building 3D Gaussian Splats.

ADC (Adaptive Density Control) is computationally light, builds scenes from the inside out, produces small file sizes and is best suited to environments rather than objects.

MCMC (Markov Chain Monte Carlo) is a newer, computationally-intensive profile that uses a probabilistic model and builds scenes from the outside in. MCMC is better suited to objects than environments.

Splat3 is the latest profile and uses an inside-out approach. It also allows for controlling the number of splats that will be produced.

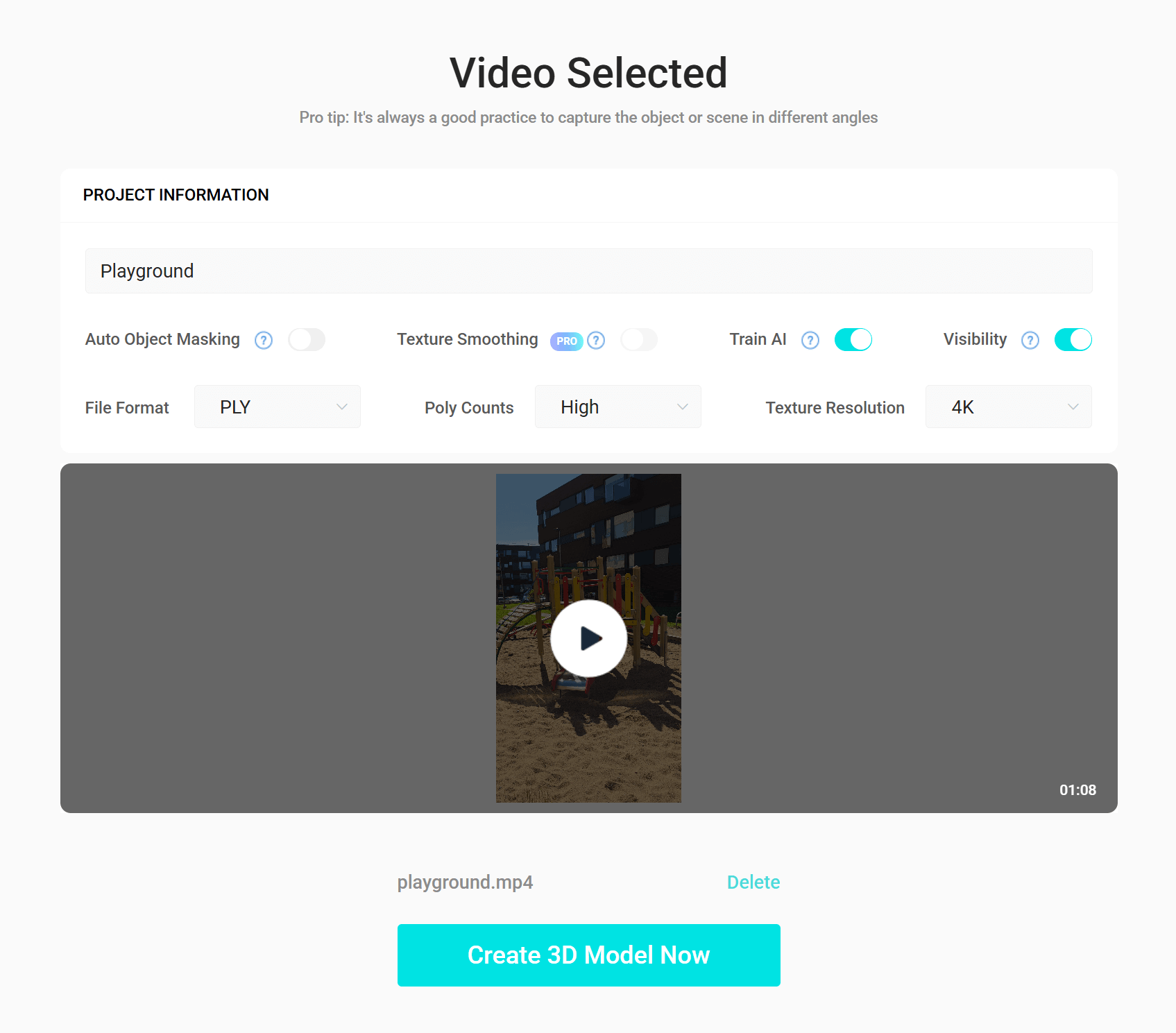

In this post, I'll take a 68-second video I recorded with my phone and build 3D Gaussian Splats using three web-based tools: Luma AI (ADC-based), Teleport by Varjo (MCMC-based) and KIRI Engine's web offering (MCMC-based).

I was going to explore Jawset's Postshot, which is Splat3-based, but it requires an Nvidia RTX 2080 or better. At the moment, I'm still using an Nvidia GTX 1080 which has neither ray-tracing cores nor tensor cores.

My Workstation

I'm using a 5.7 GHz AMD Ryzen 9 9950X CPU. It has 16 cores and 32 threads and 1.2 MB of L1, 16 MB of L2 and 64 MB of L3 cache. It has a liquid cooler attached and is housed in a spacious, full-sized Cooler Master HAF 700 computer case.

The system has 96 GB of DDR5 RAM clocked at 4,800 MT/s and a 5th-generation, Crucial T700 4 TB NVMe M.2 SSD which can read at speeds up to 12,400 MB/s. There is a heatsink on the SSD to help keep its temperature down. This is my system's C drive.

The system is powered by a 1,200-watt, fully modular Corsair Power Supply and is sat on an ASRock X870E Nova 90 Motherboard.

I'm running Ubuntu 24 LTS via Microsoft's Ubuntu for Windows on Windows 11 Pro. In case you're wondering why I don't run a Linux-based desktop as my primary work environment, I'm still using an Nvidia GTX 1080 GPU which has better driver support on Windows and I use ArcGIS Pro from time to time which only supports Windows natively.

Installing Prerequisites

I'm running Blender 4.4, Unreal Engine 5.5.4, Esri's ArcGIS Pro 3.5 and AutoCAD 2023.

I'll use FFMPEG and jq to help analyse the video and data in this post.

$ sudo apt update

$ sudo apt install \
    jq \
    ffmpeg \
    unzip

I'll also use QGIS version 3.42. QGIS is a desktop application that runs on Windows, macOS and Linux. The application has grown in popularity in recent years and has ~15M application launches from users all around the world each month.

Source Footage

I've used my Samsung S25 Ultra to capture a 341 MB MP4 file. The video is 60 fps, vertical, in 4K and HEVC-encoded. Below is a low-resolution, 0.5 fps preview.

$ ffmpeg \
    -i playground.mp4 \
    -vf "fps=0.5,scale=-1:480:flags=lanczos,split[s0][s1];[s0]palettegen[p];[s1][p]paletteuse" \
    -loop 0 \
    preview.gif

This is the video stream's metadata.

$ video_codec () {
      ffprobe -v error \
              -hide_banner \
              -print_format json \
              -show_streams "$1" \
          | jq .streams \
          | jq -S 'first(.[] | if .codec_type == "video" then . else empty end)' \
          | jq 'del(.disposition)'
  }

$ video_codec playground.mp4

{

"avg_frame_rate": "60630000/1010573",

"bit_rate": "42073776",

"chroma_location": "left",

"closed_captions": 0,

"codec_long_name": "H.265 / HEVC (High Efficiency Video Coding)",

"codec_name": "hevc",

"codec_tag": "0x31637668",

"codec_tag_string": "hvc1",

"codec_type": "video",

"coded_height": 2176,

"coded_width": 3840,

"color_primaries": "bt709",

"color_range": "tv",

"color_space": "bt709",

"color_transfer": "bt709",

"duration": "67.371533",

"duration_ts": 6063438,

"extradata_size": 159,

"film_grain": 0,

"has_b_frames": 3,

"height": 2160,

"id": "0x1",

"index": 0,

"level": 153,

"nb_frames": "4042",

"pix_fmt": "yuv420p",

"profile": "Main",

"r_frame_rate": "60/1",

"refs": 1,

"side_data_list": [

{

"displaymatrix": "\n00000000: 0 65536 0\n00000001: -65536 0 0\n00000002: 0 0 1073741824\n",

"rotation": -90,

"side_data_type": "Display Matrix"

}

],

"start_pts": 80100,

"start_time": "0.890000",

"tags": {

"handler_name": "VideoHandle",

"language": "eng",

"vendor_id": "[0][0][0][0]"

},

"time_base": "1/90000",

"width": 3840

}
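
To sanity-check the numbers above, here is a minimal sketch that turns the avg_frame_rate fraction into a decimal and works out the displayed frame size once the -90 degree rotation is applied. The helper names fraction_to_fps and display_size are ones I've made up for illustration; they aren't part of FFmpeg.

```shell
# Convert an ffprobe rate such as "60630000/1010573" into a decimal fps value.
fraction_to_fps () {
    echo "$1" | awk -F/ '{ printf "%.3f\n", $1 / $2 }'
}

# Report the size a player should display after applying the rotation.
# A rotation of +/-90 degrees swaps the stored width and height.
display_size () {
    local width=$1 height=$2 rotation=${3#-}   # strip any leading minus sign
    if [ $(( rotation % 180 )) -eq 90 ]; then
        echo "${height}x${width}"
    else
        echo "${width}x${height}"
    fi
}

# Values copied from the metadata above.
fraction_to_fps "60630000/1010573"                           # avg_frame_rate
echo "4042 67.371533" | awk '{ printf "%.3f\n", $1 / $2 }'   # nb_frames / duration
display_size 3840 2160 -90
```

Both frame-rate calculations print 59.996, so the clip runs a hair under a true 60 fps, and the rotated frame comes out as 2160x3840, which matches the vertical orientation of the footage.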

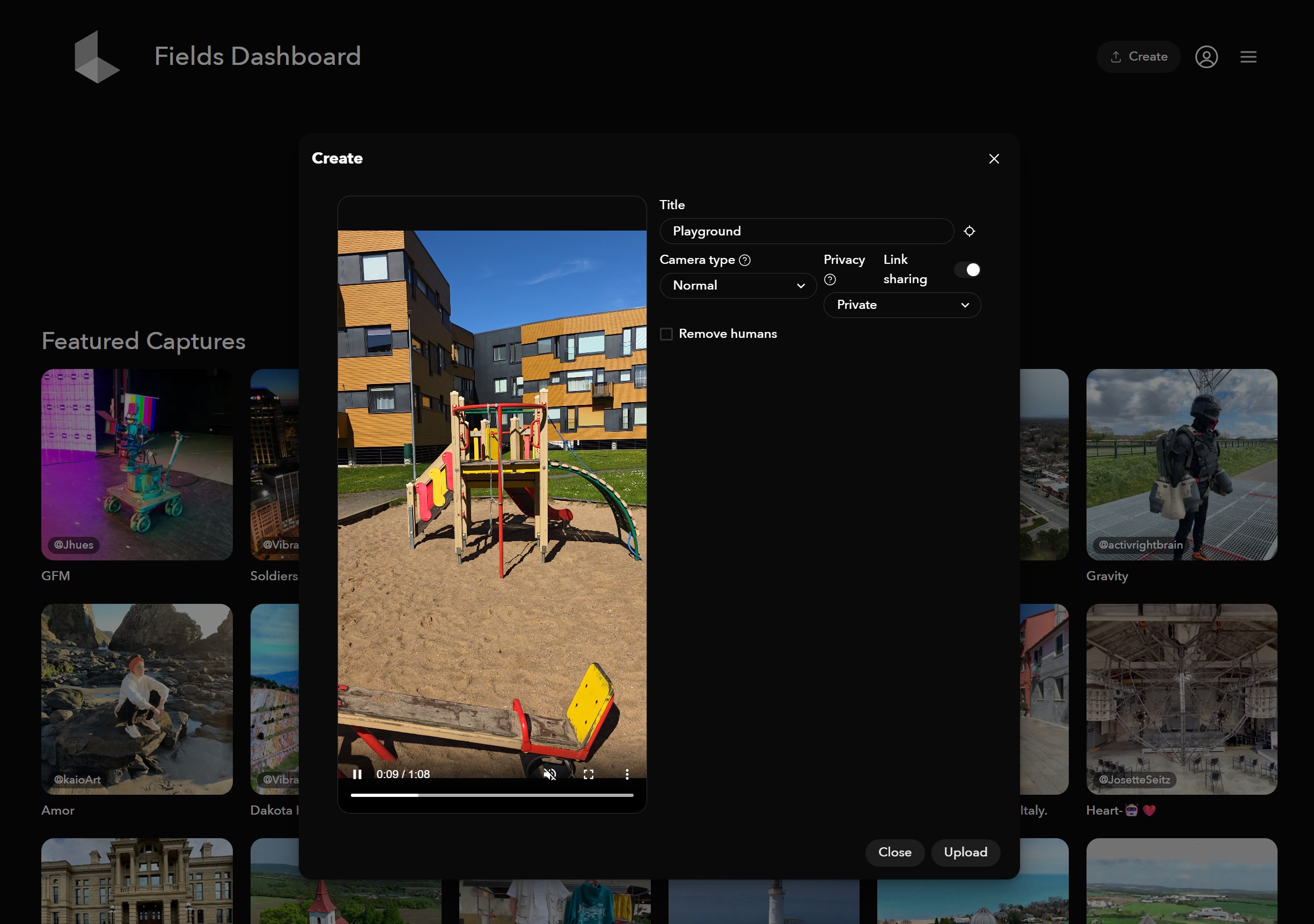

Luma AI

Luma took an hour and 45 minutes to turn the video into a 3DGS.

Once completed, I was able to download a large number of files they produced as well as view the 3DGS in their Web UI.

The source footage only captured imagery from eye level. Anything viewed at this level looks great and details like the ropes hanging off the playground look incredibly lifelike.

I didn't capture any imagery from above or below the playground so as soon as I change the vertical viewing position things begin to look off.

This is the scene from a bird's-eye view.

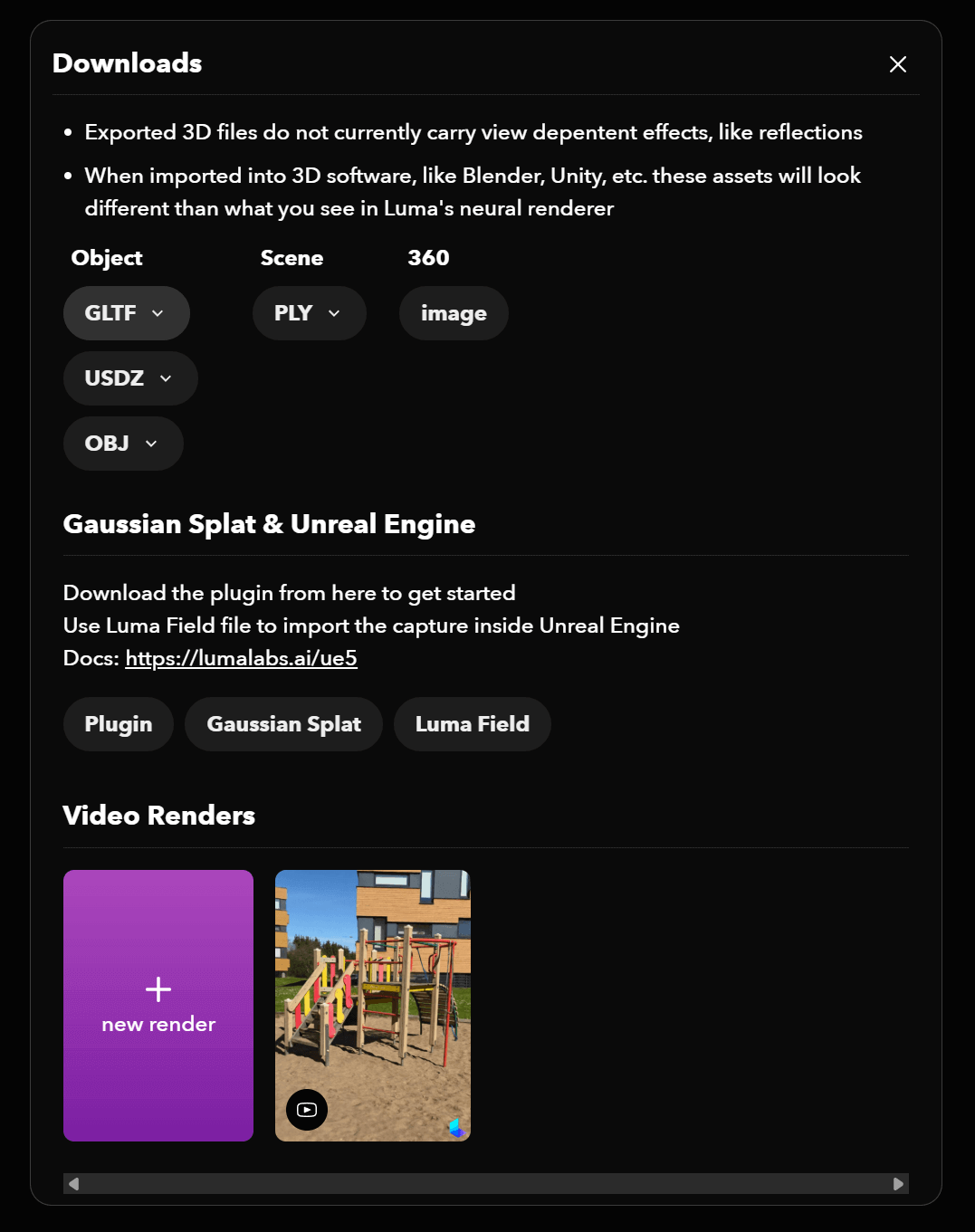

Luma's Deliverables

Luma generated a 20-second video of the 3DGS which came out as an 8.5 MB MP4 file.

There were two "scene" PLY files: a point cloud at 36 MB and a full mesh at 62 MB.

There was a 360-degree JPG at 988 KB.

I also got three textured-mesh, high-polygon-count object deliverables. These came as a GLB file at 47 MB, USDZ file at 37 MB and a 117 MB ZIP file containing an OBJ file, an MTL file and 126 PNGs.

$ unzip -l Playground_textured_mesh_obj.zip

Archive: Playground_textured_mesh_obj.zip

Length Date Time Name

--------- ---------- ----- ----

108695337 2025-05-19 12:40 mesh.obj

23562 2025-05-19 12:40 mesh.mtl

836037 2025-05-19 12:40 textures/mesh_material0000_map_Kd.png

263943 2025-05-19 12:40 textures/mesh_material0001_map_Kd.png

269190 2025-05-19 12:40 textures/mesh_material0002_map_Kd.png

...

87949 2025-05-19 12:40 textures/mesh_material0124_map_Kd.png

9099 2025-05-19 12:40 textures/mesh_material0125_map_Kd.png

--------- -------

119830497 128 files
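
A quick way to double-check the file counts in a listing like this is to tally archive members by extension. Below is a rough sketch; count_by_extension is a hypothetical helper of my own and it assumes one member path per line on stdin, which is what unzip's zipinfo mode (unzip -Z1) should produce.

```shell
# Count archive members by file extension.
# Reads one member path per line on stdin, e.g. from `unzip -Z1 archive.zip`.
count_by_extension () {
    awk -F. 'NF > 1 { counts[tolower($NF)]++ }
             END    { for (ext in counts) print ext, counts[ext] }' \
    | sort
}

# Inline sample using four of the member names from the listing above.
printf '%s\n' \
    mesh.obj \
    mesh.mtl \
    textures/mesh_material0000_map_Kd.png \
    textures/mesh_material0125_map_Kd.png \
    | count_by_extension
```

Against the real archive this would be unzip -Z1 Playground_textured_mesh_obj.zip | count_by_extension, which I'd expect to report 126 png entries alongside one obj and one mtl if the listing above is complete.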

The USDZ file is a ZIP file containing a Universal Scene Description. Pixar, Adobe, Apple, Autodesk and Nvidia formed the Alliance for OpenUSD (AOUSD) in 2023 in an attempt to standardise file formats that work between different creative software suites with fewer compatibility issues.

$ unzip -l Playground_textured_mesh_usdz.usdz

Archive: Playground_textured_mesh_usdz.usdz

Length Date Time Name

--------- ---------- ----- ----

32330638 2025-05-19 12:40 textured_mesh_usdz.usdc

322582 2025-05-19 12:40 0/image0.jpg

118743 2025-05-19 12:40 0/image1.jpg

119203 2025-05-19 12:40 0/image2.jpg

...

44154 2025-05-19 12:40 0/image123.jpg

30852 2025-05-19 12:40 0/image124.jpg

8460 2025-05-19 12:40 0/image125.jpg

--------- -------

38297768 127 files
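
Since USDZ is a ZIP container under the hood, the first few bytes of a file are usually enough to tell these deliverables apart. The sketch below checks the leading four bytes against three well-known signatures. The sniff_format name is my own, and it only covers the formats mentioned in this post.

```shell
# Guess a container format from the first four bytes of a file.
sniff_format () {
    local magic
    magic=$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')
    case "$magic" in
        504b0304) echo "zip" ;;       # "PK\003\004", also used by USDZ
        676c5446) echo "glb" ;;       # ASCII "glTF"
        706c790a) echo "ply" ;;       # ASCII "ply" followed by a newline
        *)        echo "unknown" ;;
    esac
}

# Demonstrate with a throwaway file that starts with the ZIP signature.
tmp=$(mktemp)
printf 'PK\003\004' > "$tmp"
sniff_format "$tmp"
rm -f "$tmp"
```

The demo prints zip. Running sniff_format against the .usdz deliverable should give the same answer, while the GLB and PLY files should be reported as glb and ply respectively, assuming the PLY uses Unix line endings.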

There was also a 241 MB ZIP file containing a 250 MB PLY file and a 56 MB Luma Field file intended for use in Unreal Engine 5 with Luma's 3DGS Plugin.

This PLY file contains a large number of fields which allow 3DGS viewers to recalculate any one gaussian's colour, size and transparency as the camera's position relative to it changes.

$ unzip -p Playground_gaussian_splatting_point_cloud.ply.zip \
        gs_Playground.ply \
    | strings \
    | head -n65

format binary_little_endian 1.0

element vertex 1105480

property float x

property float y

property float z

property float nx

property float ny

property float nz

property float f_dc_0

property float f_dc_1

property float f_dc_2

property float f_rest_0

property float f_rest_1

property float f_rest_2

...

property float f_rest_42

property float f_rest_43

property float f_rest_44

property float opacity

property float scale_0

property float scale_1

property float scale_2

property float rot_0

property float rot_1

property float rot_2

property float rot_3

end_header
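
The header is enough to work out how much space each gaussian takes up on disk. Below is a minimal sketch that reads a PLY header on stdin and reports the vertex count, the number of float properties and the resulting record size. The ply_record_size name is hypothetical and the function assumes every property is a 4-byte float, as is the case in the header above.

```shell
# Summarise a binary PLY header read from stdin: vertex count, number of
# float properties and the bytes each vertex record should occupy.
ply_record_size () {
    awk '$1 == "element" && $2 == "vertex" { vertices = $3 }
         $1 == "property" && $2 == "float" { floats++ }
         $1 == "end_header"                { exit }
         END {
             printf "vertices=%d floats=%d bytes_per_vertex=%d\n",
                    vertices, floats, floats * 4
         }'
}

# Shortened sample header with only the three position fields.
printf '%s\n' \
    'format binary_little_endian 1.0' \
    'element vertex 1105480' \
    'property float x' \
    'property float y' \
    'property float z' \
    'end_header' \
    | ply_record_size
```

The sample prints vertices=1105480 floats=3 bytes_per_vertex=12. Appending | ply_record_size to the command above should, assuming the elided lines are f_rest_3 through f_rest_41, report 62 float properties, or 248 bytes per gaussian.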

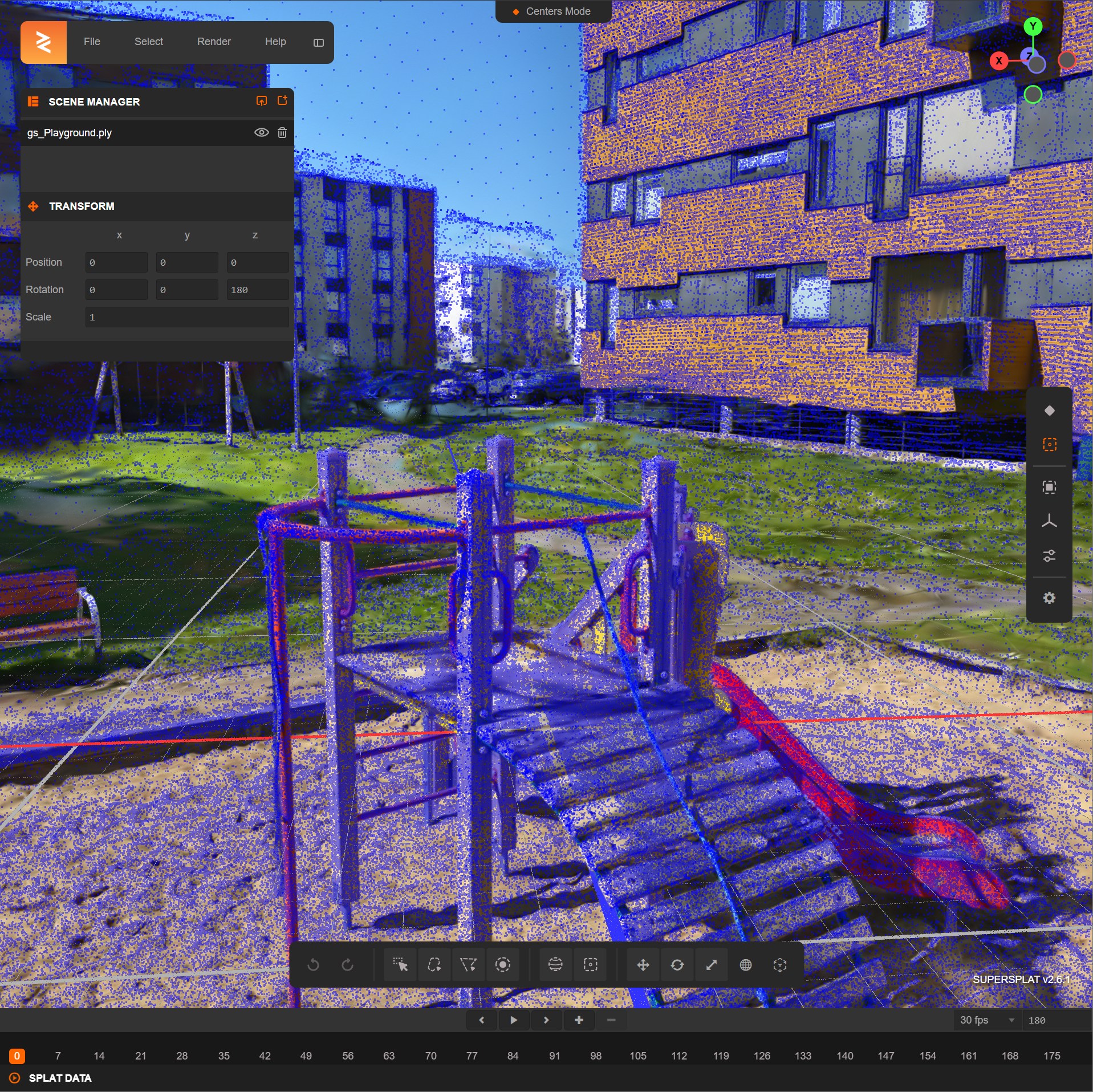
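
The raw values stored in these fields aren't directly displayable. As I understand the reference implementation's conventions, the base colour is 0.5 plus the f_dc value multiplied by the degree-0 spherical harmonic constant, opacity is passed through a sigmoid and scales are stored as logarithms. I haven't confirmed that Luma's export follows the same conventions, so treat the sketch below as an assumption. The decode_gaussian name is my own, and the function ignores the 45 f_rest coefficients that make colour view-dependent.

```shell
# Decode one gaussian's raw PLY values into display-ready numbers.
# Arguments: f_dc_0 f_dc_1 f_dc_2 opacity scale_0
# Assumes the reference 3DGS conventions; other exporters may differ.
decode_gaussian () {
    awk -v r="$1" -v g="$2" -v b="$3" -v o="$4" -v s="$5" '
        function clamp01(v) { return v < 0 ? 0 : (v > 1 ? 1 : v) }
        function to_byte(v) { return int(clamp01(0.5 + c0 * v) * 255 + 0.5) }
        BEGIN {
            c0 = 0.28209479177387814    # degree-0 spherical harmonic constant
            printf "rgb=%d,%d,%d ", to_byte(r), to_byte(g), to_byte(b)
            printf "opacity=%.3f ", 1 / (1 + exp(-o))   # sigmoid
            printf "scale=%.3f\n", exp(s)               # stored as a logarithm
        }'
}

# All-zero inputs should land on mid-grey, half opacity and unit scale.
decode_gaussian 0 0 0 0 0
```

The demo prints rgb=128,128,128 opacity=0.500 scale=1.000. The remaining scale fields would be decoded the same way as scale_0, and the four rot fields form a quaternion that viewers typically normalise before use.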

SuperSplat's Editor

The SuperSplat Editor supports editing and exporting Compressed PLY files. I've seen this tool reduce file sizes by up to 99%. Luma's PLY file loads without issue.

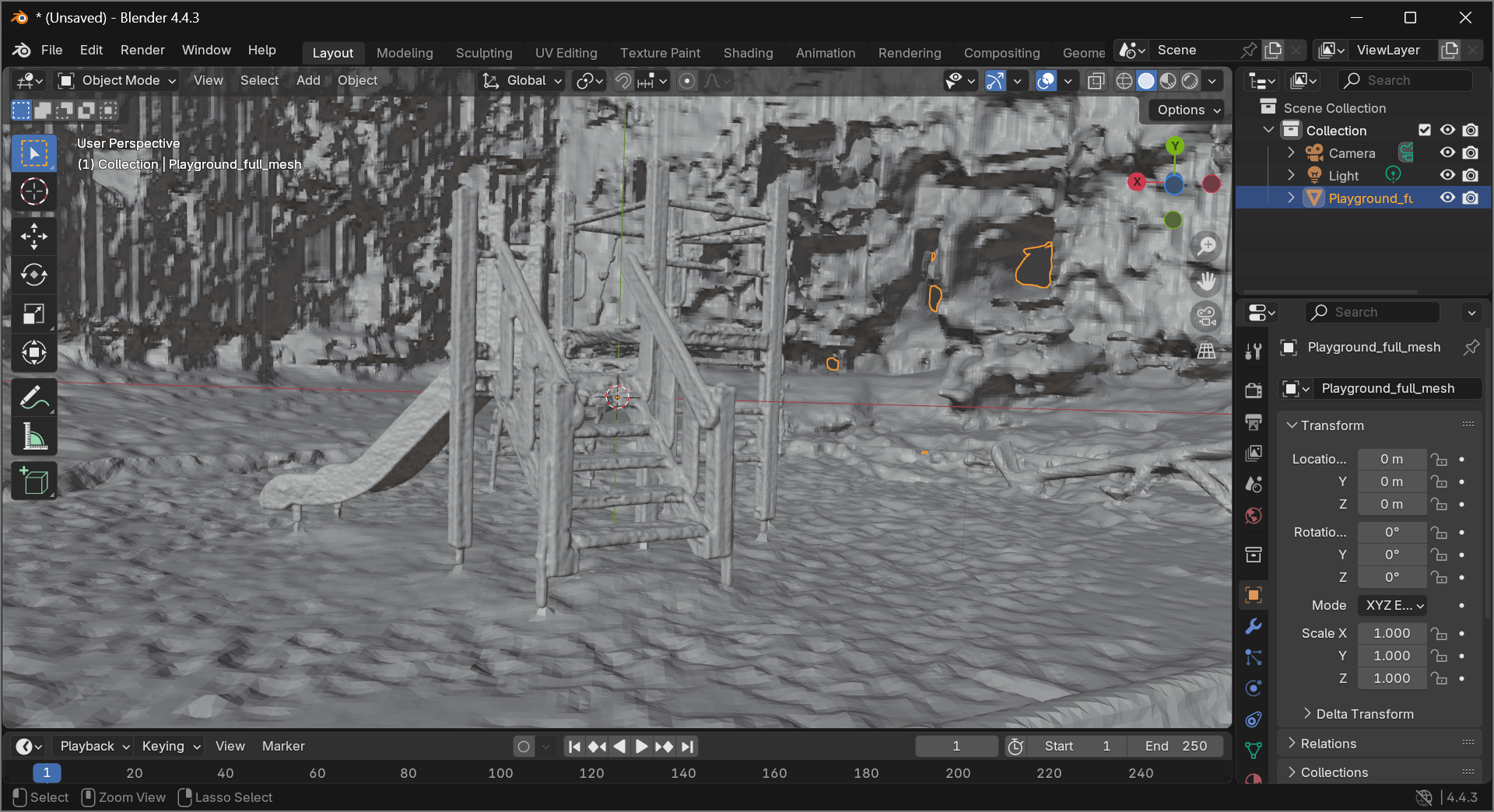

Luma's PLYs in Blender

All of Luma's PLY files can be dropped into a Blender scene without issue.

Below is the full mesh.

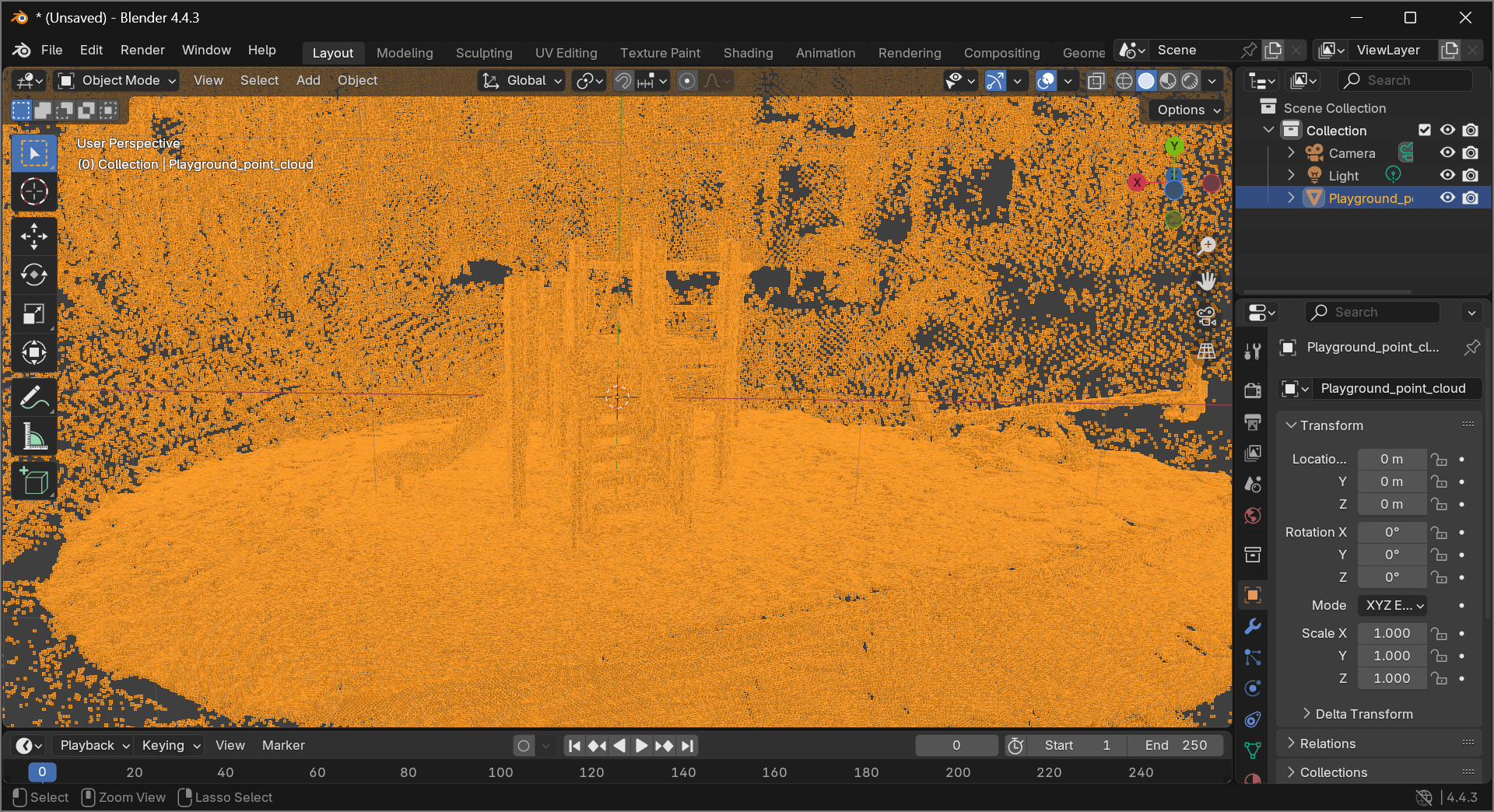

Below is the point cloud.

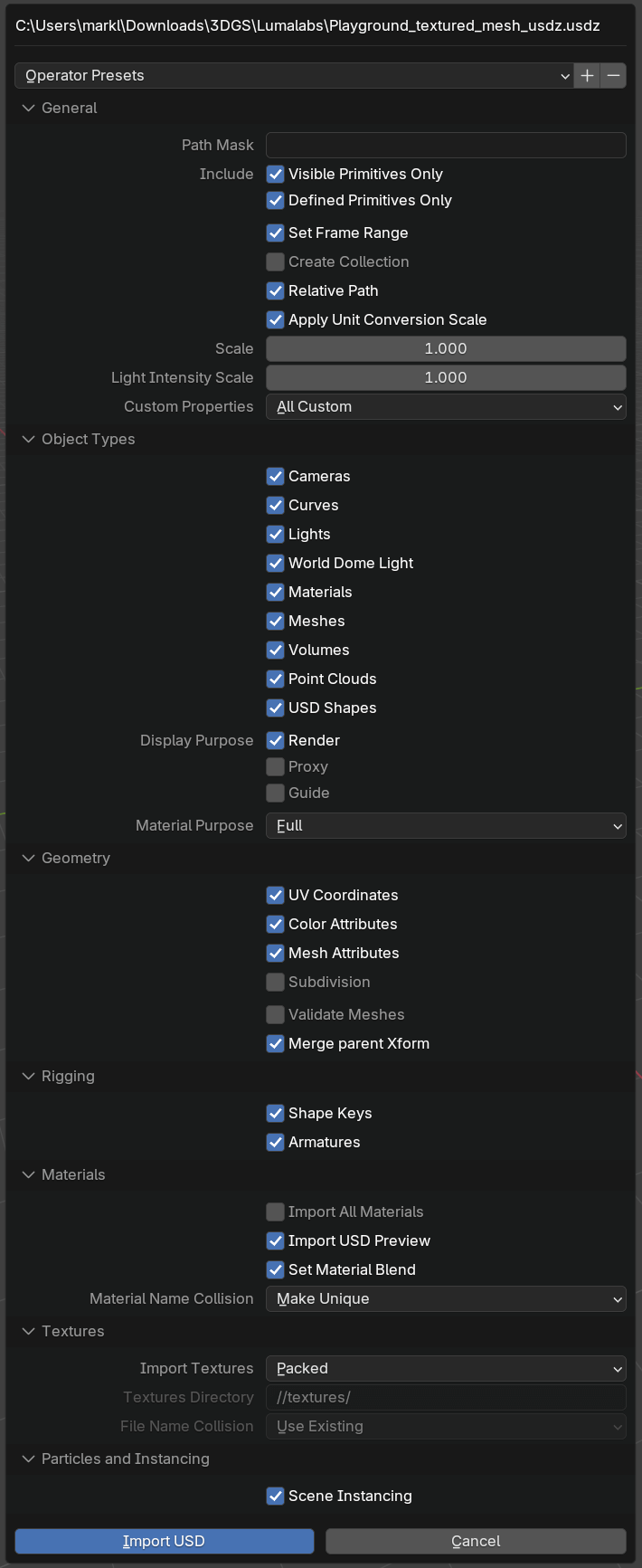

The USDZ file offers a large number of options when importing.

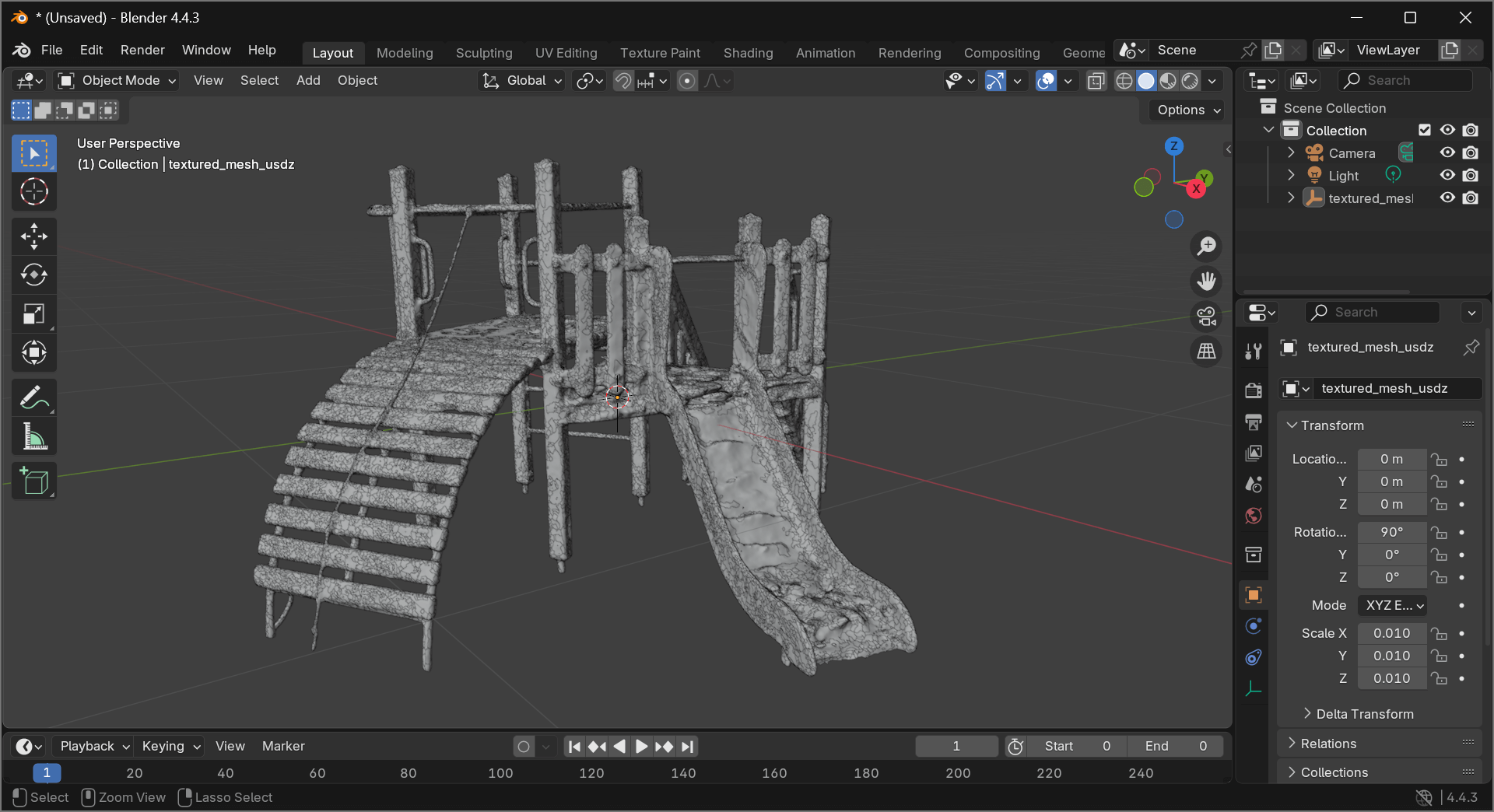

The resulting import only shows the playground, not the buildings in the background.

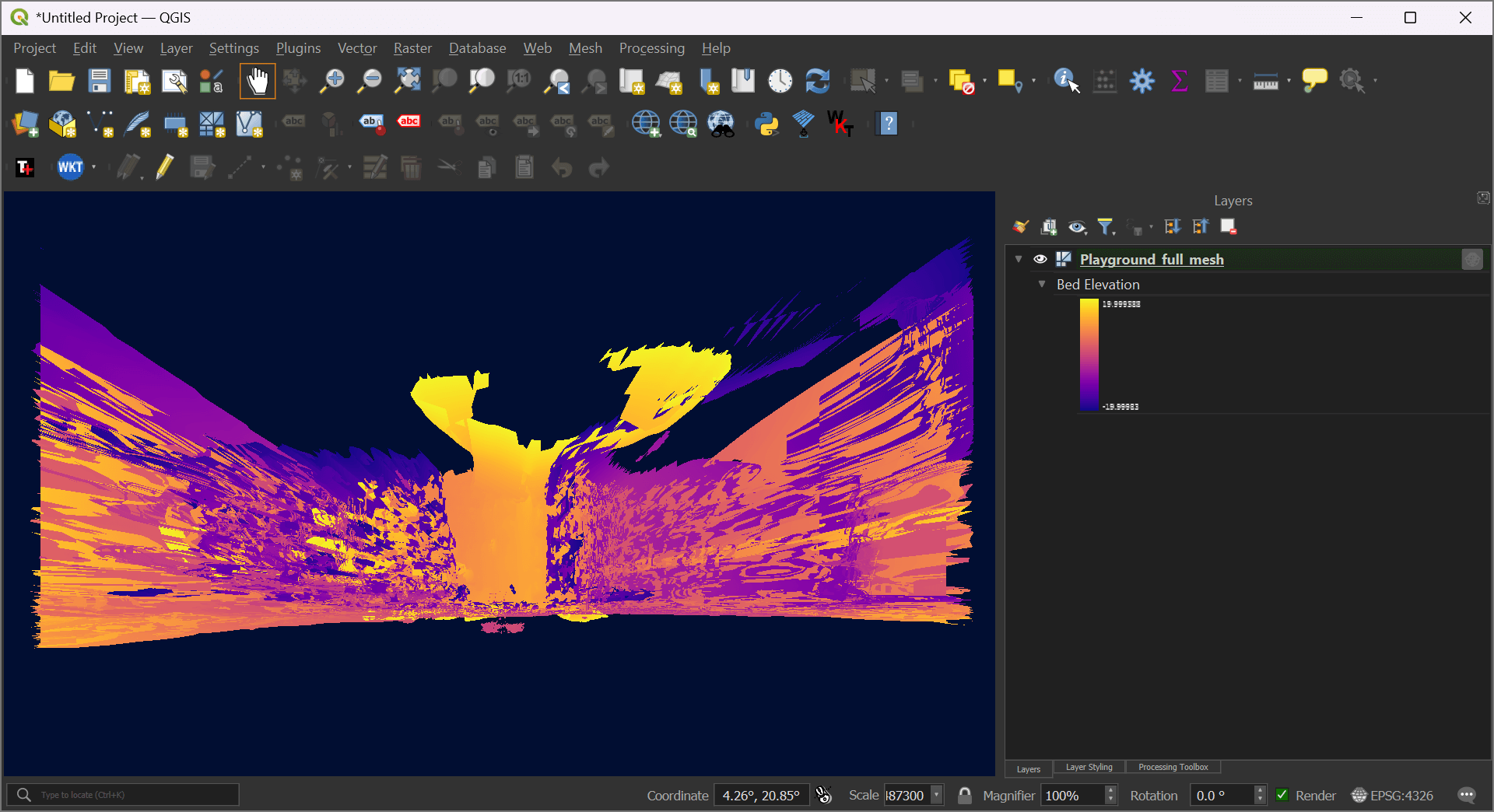

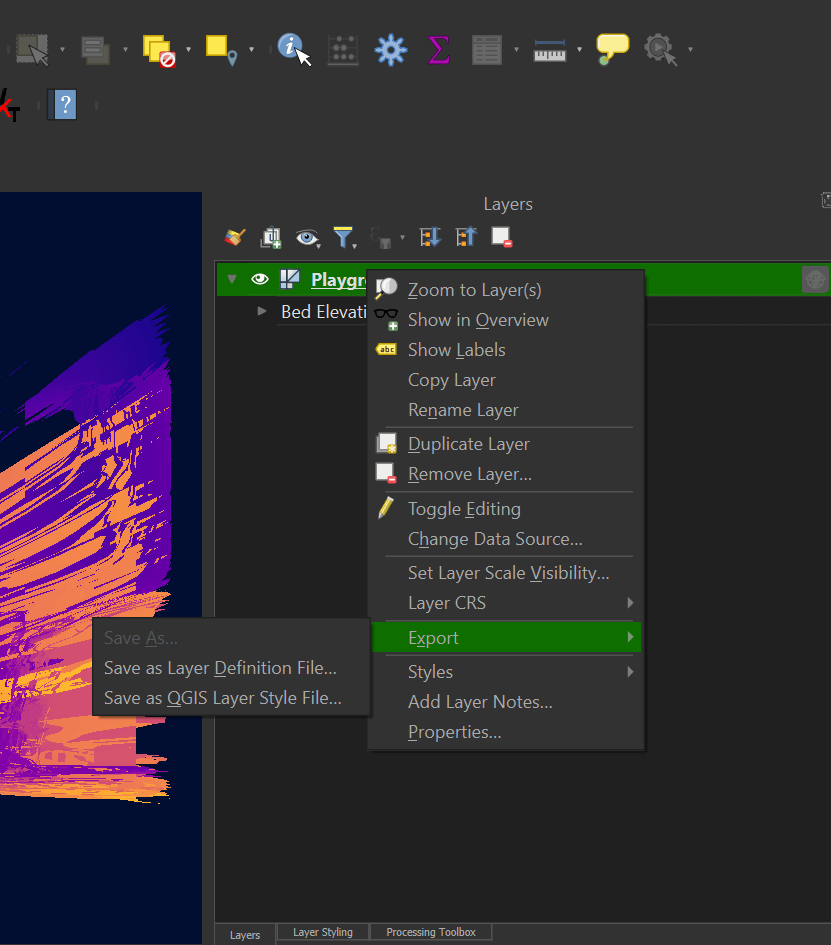

Luma's PLYs in QGIS

The mesh PLY file can be dropped into a QGIS scene and will load automatically.

I need to circle back to this and figure out how to georeference the mesh to its real world location.

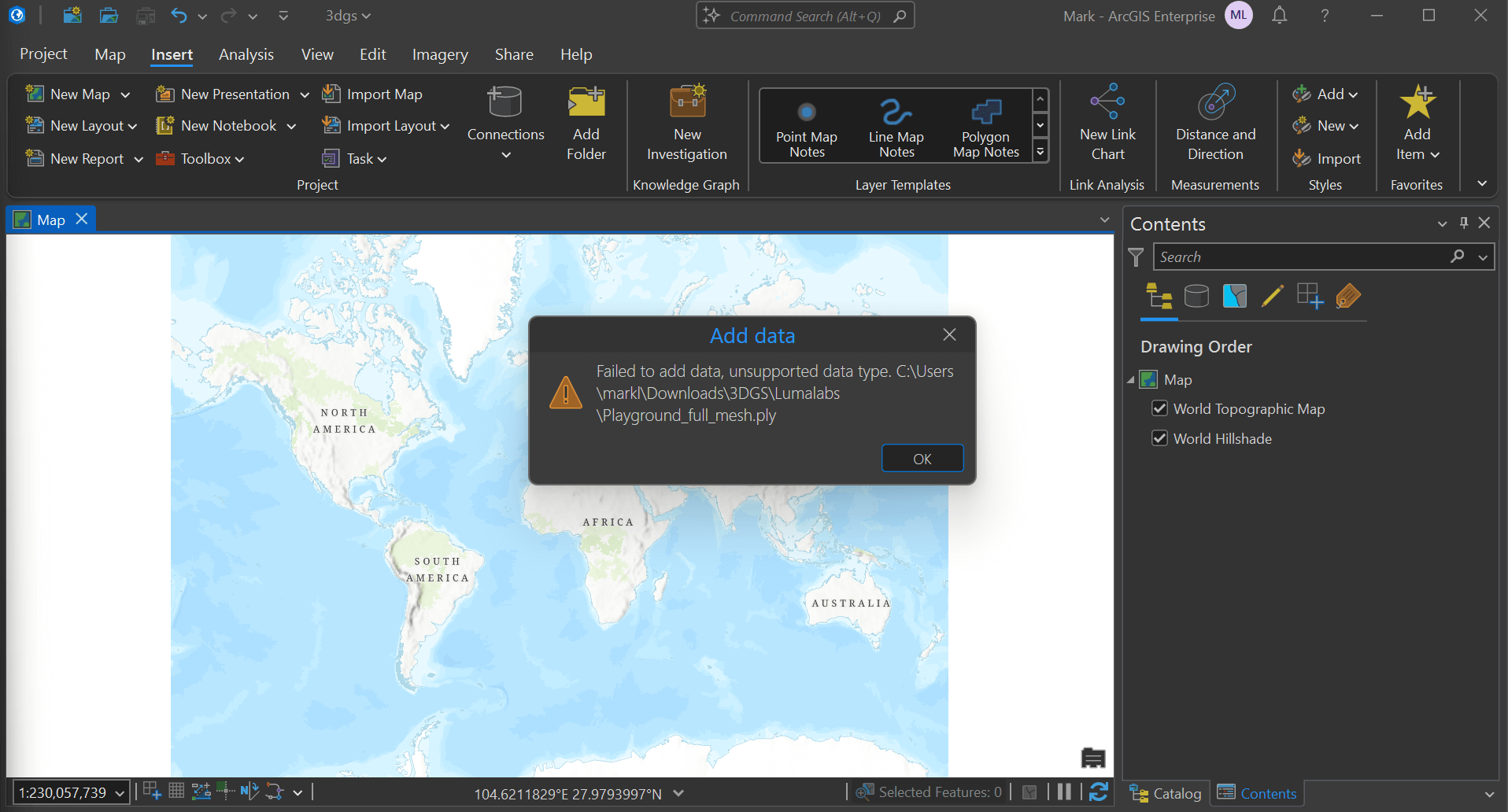

ArcGIS Pro & AutoCAD

Esri's ArcGIS Pro 3.5 was released last week and there were mentions of more point cloud support but as of this writing, the PLY files can't be dropped into a scene without issue.

PLY files aren't natively supported by AutoCAD either. Commercial add-ons are needed to import these files.

I came across people suggesting converting the PLY file into a DWG file in order to get it to open in AutoCAD. I was hoping QGIS had some built-in functionality to export the full mesh as a DWG file but that export functionality isn't available as of this writing.

I'll have to circle back to these tools' 3DGS support in a future post.

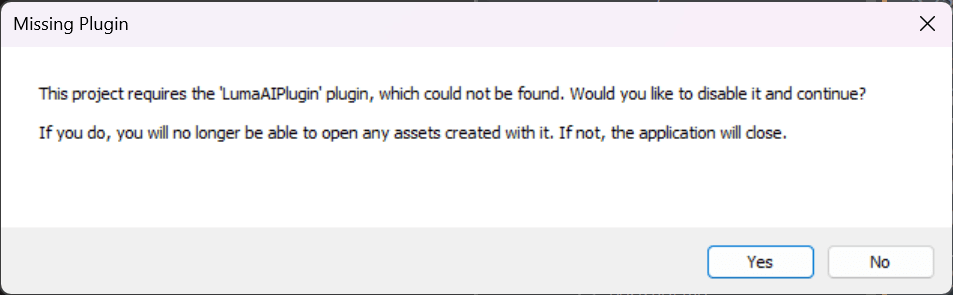

Unreal Engine 5

I have Unreal Engine 5.5.4 installed and version 0.41 of Luma's plug-in appears to be installed as well. When I tried to load their 3DGS starter project, which is based on Unreal's "cinematic film" template, I was greeted with this error message.

I suspect some more troubleshooting should resolve this issue as I've seen plenty of people on YouTube get this working.

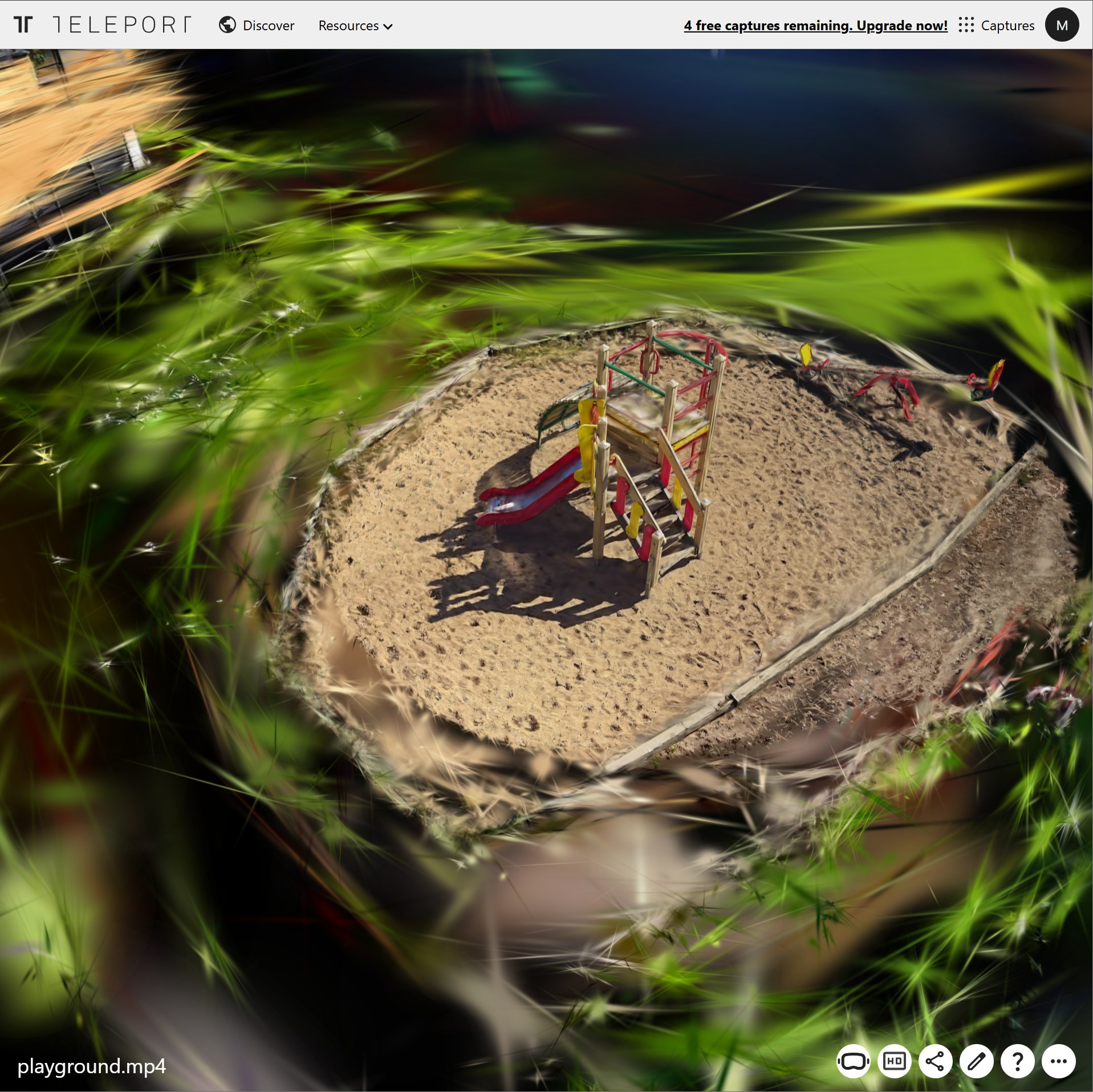

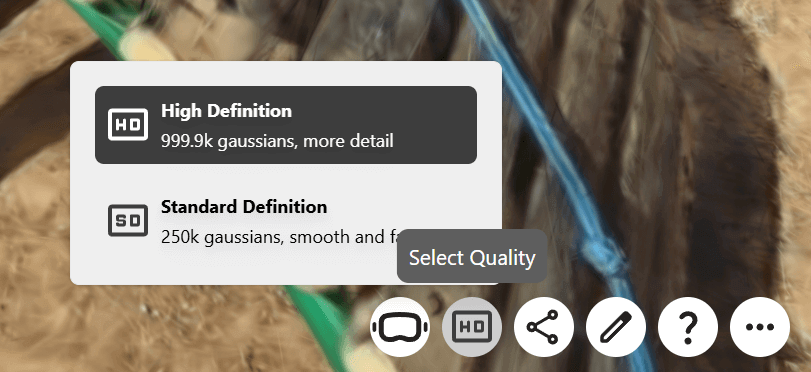

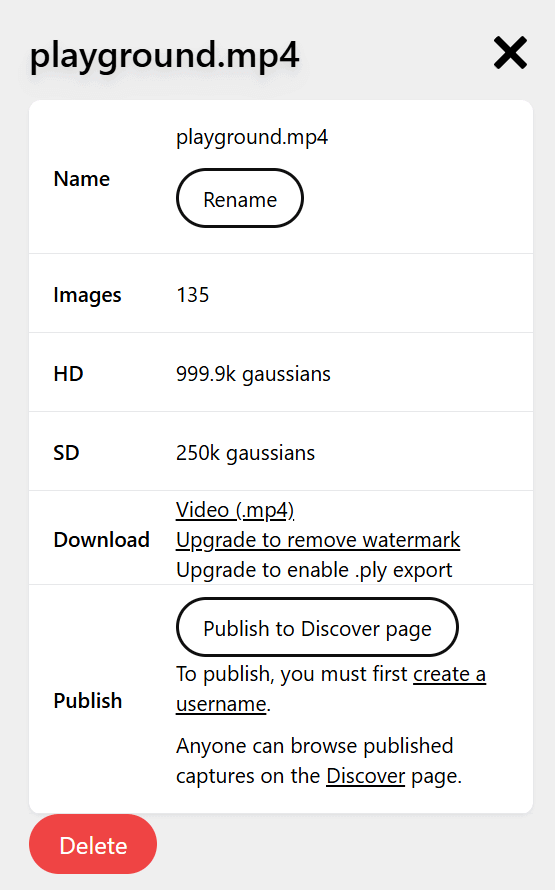

Teleport

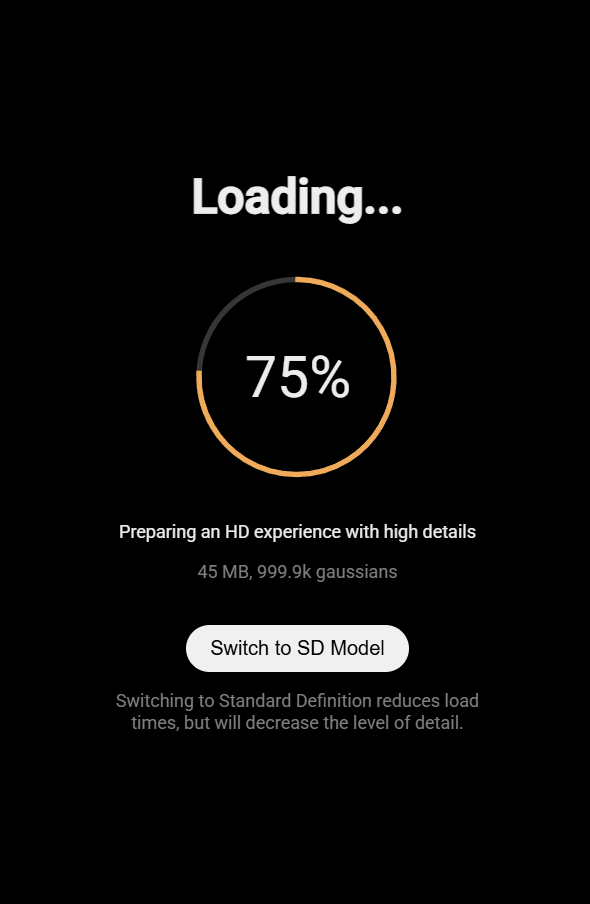

Teleport took an hour and 32 minutes to generate a 999.9K-gaussian 3DGS of the video I captured.

The playground at first glance looked pretty good but the buildings in the background are missing a lot of detail.

Looking closer at one of the ropes on the playground, it's clear the quality of the 3DGS leaves a lot to be desired.

The bird's-eye view of the scene is pretty chaotic outside of the playground itself.

With their free trial, I was only able to download an MP4 of the 3DGS, not any other underlying deliverables.

KIRI Engine

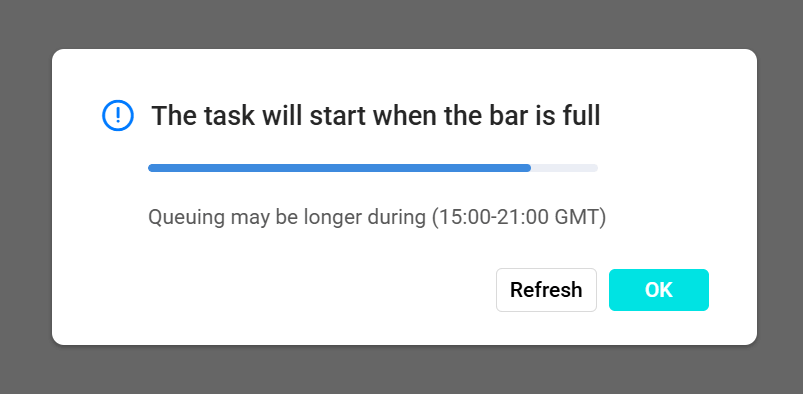

KIRI Engine only took 21 minutes to generate a 3DGS.

Their progress UI was helpful and pretty specific.

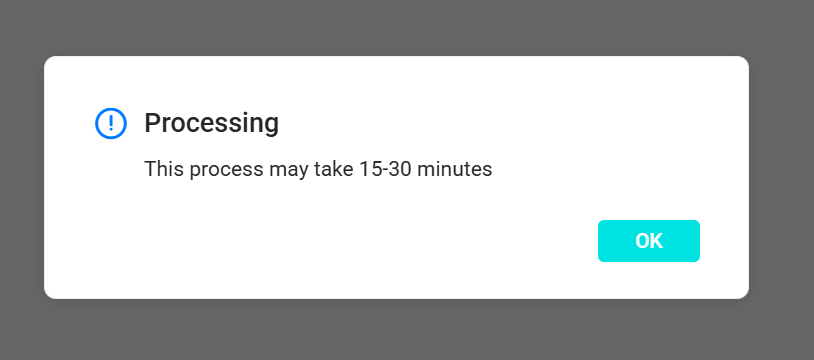

The resulting scene cut out all of the surrounding environment.

This is the bird's-eye view of the playground. I suspect much more footage from above would be needed to help fill in the gaps.

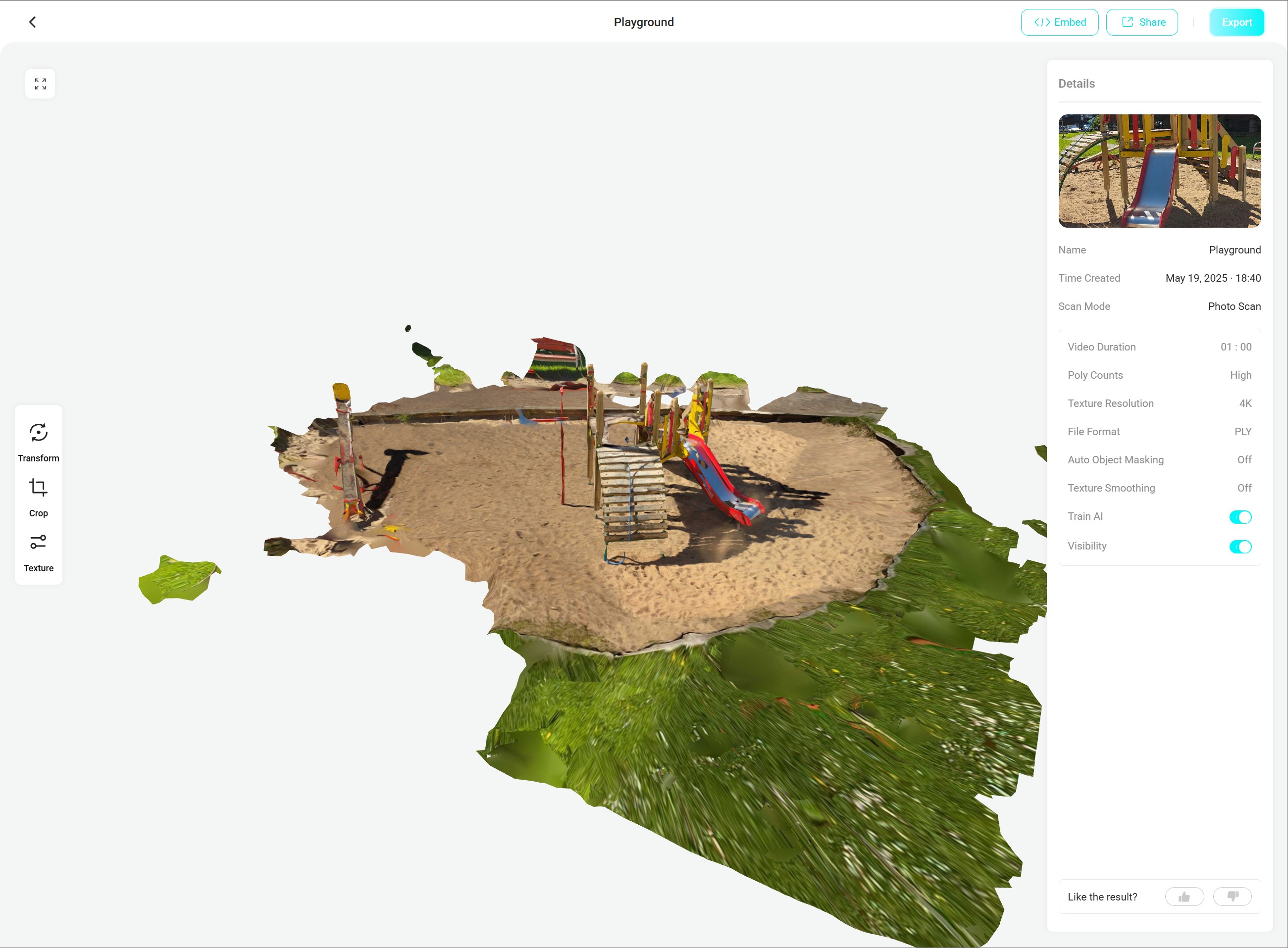

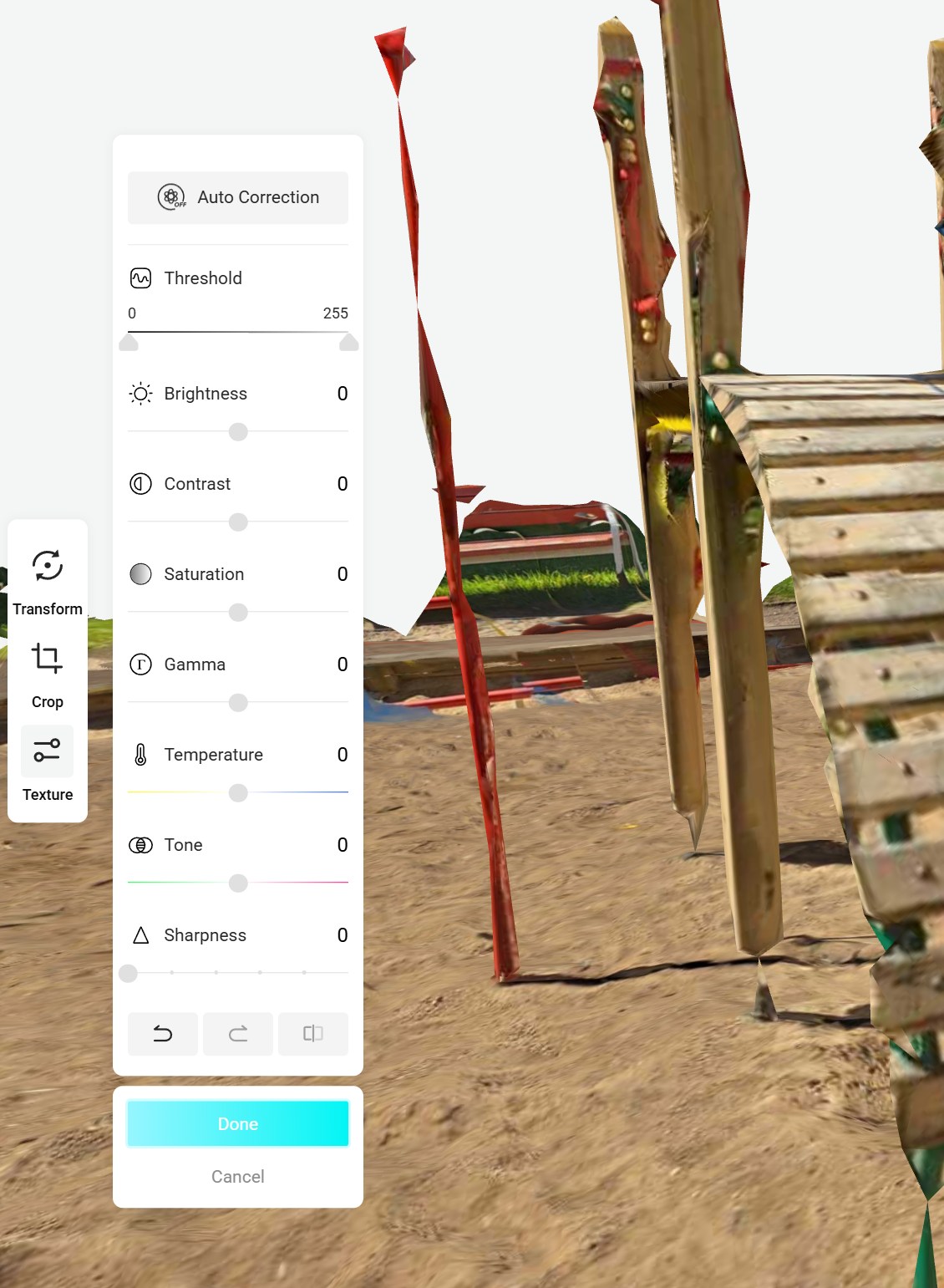

Their Web UI has some basic editing features.

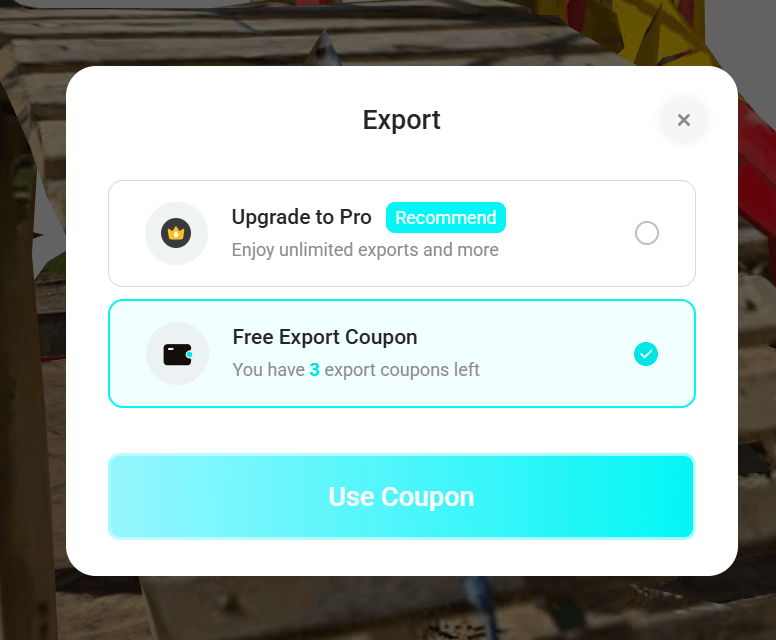

The free trial includes three export "coupons".

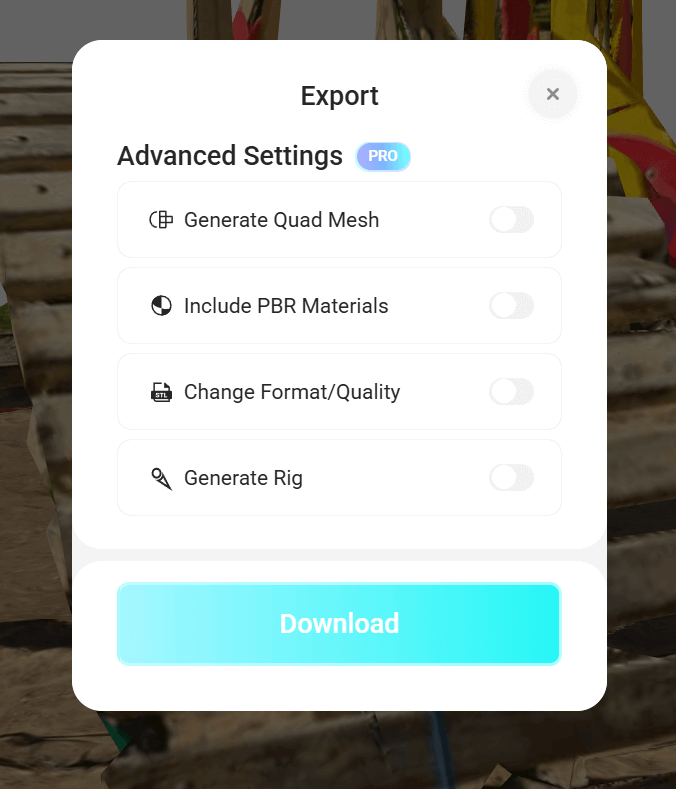

I was able to download a high-polygon PLY file at 442 MB as well as a JPEG texture map.

Below is the texture map.